Confluent Kafka Docker Image

Ever feel like your digital life is a bit of a chaotic mess? Like your emails, social media notifications, and that important document you're sure you saved somewhere are all vying for attention, occasionally tripping over each other? Yeah, me too. It's like a busy marketplace where everyone's shouting their wares, and you're just trying to find your favourite spice stall without getting lost. Well, imagine that marketplace, but for your applications. That's where something like the Confluent Kafka Docker image swoops in, like a super-organized town crier, making sure all the messages get to the right people, at the right time, without a single digital elbow being thrown.

Think of Kafka as this incredibly efficient, super-speedy postal service for your applications. Instead of your apps sending messages directly to each other, which can get messy real fast (imagine a bunch of neighbours yelling across their gardens, hoping the right person hears them), they send their messages to Kafka. Kafka then acts like a central post office, neatly sorting everything into different "topics" (think of these as different mailboxes for different types of mail – bills, birthday cards, junk mail!).

And this "Confluent Kafka Docker Image"? It's basically a pre-packaged, ready-to-go Kafka setup. Imagine you need to bake a cake. You could go out, buy flour, sugar, eggs, find a recipe, mix it all up, hope for the best. Or, you could buy a brilliant cake mix that has all the ingredients perfectly proportioned, with easy-to-follow instructions. That's what the Docker image is for Kafka. It takes all the fiddly bits, all the setup headaches, and bundles it up so you can get your Kafka postal service running with minimal fuss. It’s like having a Michelin-star chef deliver a perfectly prepared meal kit straight to your door.

Why would you even want a super-speedy postal service for your apps? Well, let's get real. In today's world, applications don't just sit around doing one thing. They're constantly talking to each other. Your online shopping cart needs to talk to the payment processor, which then needs to talk to the inventory system, which then needs to tell the shipping department. If any of those conversations get lost, or if one app is just a bit slow to respond, the whole chain can break. It’s like trying to play a game of telephone with a bunch of toddlers – by the end, nobody knows what's going on.

Kafka, and by extension the Confluent Kafka Docker image, brings order to this chaos. It acts as a central nervous system for your applications. It decouples them, meaning your shopping cart app doesn't need to know exactly how to talk to the payment processor. It just needs to know how to send a message to Kafka. The payment processor (or any other app interested in that message) can then subscribe to that specific "topic" and pick up the message whenever it's ready. It's like having a reliable inbox where all your important communications land, neatly organized, ready for you to deal with at your own pace. No more frantically searching for that missed message!
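If you like seeing ideas in code, here's a tiny sketch of that inbox idea. To be clear, this is a toy model in plain Python, not Kafka's client API: the `ToyBroker` class, the `orders` topic, and the subscriber names are all made up for illustration. It shows the two things that matter here: the sender only ever talks to the broker, and each subscriber keeps its own place in the topic.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a broker: one append-only list per topic."""

    def __init__(self):
        self._logs = defaultdict(list)    # topic name -> list of messages
        self._offsets = defaultdict(int)  # (subscriber, topic) -> next index to read

    def publish(self, topic, message):
        """Append a message to the topic's log and return its position."""
        self._logs[topic].append(message)
        return len(self._logs[topic]) - 1

    def poll(self, subscriber, topic):
        """Return the messages this subscriber has not seen yet, then advance its offset."""
        start = self._offsets[(subscriber, topic)]
        batch = self._logs[topic][start:]
        self._offsets[(subscriber, topic)] = start + len(batch)
        return batch


broker = ToyBroker()

# The shopping cart only knows about the broker, not about who reads the messages.
broker.publish("orders", {"order_id": 1, "total": 25.0})
broker.publish("orders", {"order_id": 2, "total": 9.5})

# Two independent subscribers each get the full stream, at their own pace.
payments_batch = broker.poll("payments", "orders")
inventory_batch = broker.poll("inventory", "orders")

# Polling again returns nothing new, because the payments offset has moved on.
payments_again = broker.poll("payments", "orders")
```

Notice that adding a third subscriber would not require touching the shopping cart code at all, which is the whole point of decoupling.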

Confluent, the company behind this nifty Docker image, really understands this. They've taken the open-source Apache Kafka and added a whole bunch of extra goodies. Think of Apache Kafka as the really good, classic recipe for a chocolate cake. Confluent takes that recipe and adds a sprinkle of magic, maybe some fancy frosting, and a handy serving suggestion. They make Kafka easier to manage, more secure, and add features that make it even more powerful. It’s like going from a basic smartphone to the latest model with all the bells and whistles – same core function, but a much smoother, richer experience.

So, how does this Docker thing actually work, you ask? Docker is basically a way to package applications and their dependencies into a standardized unit called a container. Think of a container like a self-contained little apartment for your application. It has its own furniture, its own fittings, its own front door: everything it needs to run, without interfering with any other apartments in the building (the apartments do share the building's foundations, which in Docker terms is the host's kernel). And the beauty of it is, if it works in one apartment, it should work in any apartment that has a Docker engine. This means you can set up Kafka on your laptop, on a server in your office, or on a giant cloud server, and, given the same image and the same configuration, it'll behave the same way. Far fewer "it works on my machine!" excuses.

Using the Confluent Kafka Docker image means you don't have to spend hours wrestling with installation scripts, fiddling with configuration files that look like they were written in ancient Sanskrit, or praying to the server gods that you've got all the dependencies in the right place. You just pull the image, start a container with a handful of settings, and poof: you've got a Kafka broker ready to go. It’s like ordering a pizza: you don't need to know how to knead dough or manage a wood-fired oven. You just want a delicious pizza, and the pizza place delivers. The Docker image is your pizza delivery service for Kafka.
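So what do those "handful of settings" look like? As far as I understand Confluent's documentation, their images read broker settings from environment variables: take the property name, double any underscores, turn dashes into triple underscores, turn dots into single underscores, uppercase it, and stick `KAFKA_` in front. The sketch below applies that rule and assembles a `docker run` command without executing it. Treat the details as assumptions to check against the official docs: the image name `confluentinc/cp-kafka`, the container name `kafka-sketch`, and the single setting shown are illustrative, and a real broker needs more settings than this before it will start.

```python
import shlex


def to_env_name(property_name, prefix="KAFKA_"):
    """Convert a broker property such as 'advertised.listeners' to an env var name."""
    # Escape existing underscores and dashes first, so the underscores that
    # replace the dots afterwards are not doubled by mistake.
    escaped = property_name.replace("_", "__").replace("-", "___").replace(".", "_")
    return prefix + escaped.upper()


def docker_run_args(image, container_name, host_port, properties):
    """Build (but do not run) a 'docker run' argument list for one broker container."""
    args = ["docker", "run", "-d", "--name", container_name, "-p", f"{host_port}:9092"]
    for name, value in properties.items():
        args += ["-e", f"{to_env_name(name)}={value}"]
    args.append(image)
    return args


# Illustrative only: a real single-node broker needs further settings
# (for example its controller or ZooKeeper configuration), omitted here.
settings = {"advertised.listeners": "PLAINTEXT://localhost:9092"}
command = docker_run_args("confluentinc/cp-kafka", "kafka-sketch", 9092, settings)
command_line = shlex.join(command)
print(command_line)
```

Building the command as a list rather than a string keeps quoting problems out of the picture if you later hand it to something like `subprocess.run`.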

And what kind of "applications" are we even talking about here? Oh, pretty much anything that generates data or needs to react to something happening. Think of your website. Every click, every purchase, every user action is a piece of data. Instead of your website trying to process all of that in real-time (which can slow it down like a snail trying to cross a highway), it can just send those events to Kafka. Then, other services can pick up those events and do interesting things with them. Maybe one service updates your user dashboard, another sends you a personalized recommendation email, and a third logs it for later analysis. All happening smoothly in the background, thanks to Kafka.

Or imagine you're running a fleet of delivery trucks. Each truck is constantly sending its GPS location. Instead of having one central server trying to keep track of every single truck all at once (which is like trying to herd cats during a laser light show), each truck can send its location updates to Kafka. Then, your mapping service can subscribe to that "location" topic, your dispatch system can subscribe to it to see if anyone's running late, and your analytics team can grab the data to optimize routes for the future. It's all about decoupling and letting different systems do their job without being overwhelmed.

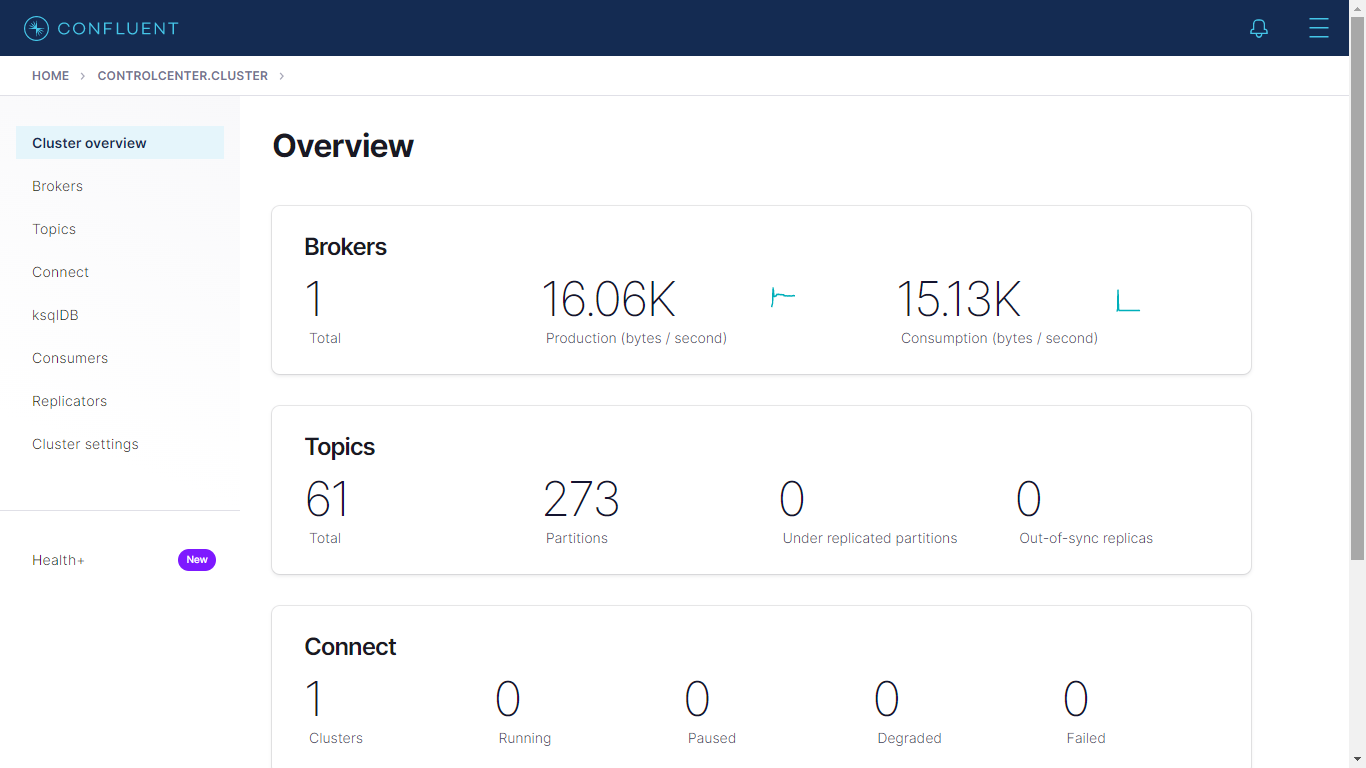

Confluent Platform, which the Docker image is part of, is like a whole toolkit for building these data pipelines. It's not just Kafka; it's also tools for managing your Kafka topics, for moving data in and out of Kafka, and for understanding what's going on. It’s like getting a whole workshop with all the best tools, not just a hammer. And this Docker image gives you a fantastic way to start playing with that workshop.

Let's talk about scaling. Imagine your little lemonade stand suddenly becomes the hottest spot in town. All these new customers are showing up, wanting lemonade. If your setup isn't ready, you're going to have long lines, grumpy customers, and maybe even spilled lemonade. Kafka, especially when managed by Confluent and deployed via Docker, is built to handle that kind of growth. You can add more "brokers" (think of these as more cashiers or lemonade dispensers) to your Kafka cluster, and because each topic is split into partitions that can live on different brokers, the work gets shared out instead of piling up at one till. The Docker image makes it relatively straightforward to spin up more broker containers as your needs grow.
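Here's a rough sketch of how that sharing-out works, in case the cashier picture is too fuzzy. Topics are split into partitions, each message's key decides which partition it goes to, and partitions are spread across brokers. Everything below is a simplification under my own assumptions: `zlib.crc32` is a stand-in for Kafka's real hashing, the broker names are invented, and in a real cluster moving existing partitions onto a new broker is a separate reassignment step rather than something that happens by itself.

```python
import zlib


def partition_for(key, num_partitions):
    """Map a message key to a partition; the same key always lands in the same partition."""
    # crc32 is a stand-in hash for this sketch, not the one Kafka clients use.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_partitions(num_partitions, brokers):
    """Spread partition numbers over the given brokers round-robin."""
    assignment = {broker: [] for broker in brokers}
    for partition in range(num_partitions):
        owner = brokers[partition % len(brokers)]
        assignment[owner].append(partition)
    return assignment


# Six partitions shared by two brokers, then by three.
two_brokers = assign_partitions(6, ["broker-1", "broker-2"])
three_brokers = assign_partitions(6, ["broker-1", "broker-2", "broker-3"])

# All events for one customer go to one partition, so their order is preserved there.
first = partition_for("customer-42", 6)
second = partition_for("customer-42", 6)
```

With two brokers each one looks after three partitions; with three brokers each looks after two, which is the "more cashiers, shorter queues" effect in miniature.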

It's also pretty robust. You know how sometimes you're trying to download a big file, and your internet connection drops? If it's not designed well, you lose everything and have to start again. Kafka is built with durability in mind. Messages are written to disk, and when a topic is set up with more than one replica, copies live on several brokers, so if one broker has a little hiccup, the others can keep things running. It’s like having multiple backup generators for your critical systems. This means your applications can be a bit more resilient, a bit less prone to the dreaded "oops, something went wrong" messages.
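To make the backup-generator idea a little more concrete, here's a deliberately simplified model of keeping copies of the same log on several brokers. It is only a sketch under my own assumptions: the `ReplicatedLog` class and broker names are hypothetical, and it skips everything that makes the real thing hard, such as electing a leader, tracking which replicas are in sync, and letting a failed broker catch up again.

```python
class ReplicatedLog:
    """Toy replicated log: every append is copied to each live replica."""

    def __init__(self, replica_names):
        self.replicas = {name: [] for name in replica_names}
        self.down = set()  # names of replicas that are currently unavailable

    def append(self, message):
        """Write the message to every replica that is still up."""
        live = [name for name in self.replicas if name not in self.down]
        if not live:
            raise RuntimeError("no live replica to write to")
        for name in live:
            self.replicas[name].append(message)

    def fail(self, name):
        """Simulate one broker going offline."""
        self.down.add(name)

    def read_all(self):
        """Read the full log from the first replica that is still up."""
        for name, log in self.replicas.items():
            if name not in self.down:
                return list(log)
        raise RuntimeError("no live replica to read from")


log = ReplicatedLog(["broker-1", "broker-2", "broker-3"])
log.append("order placed")
log.append("payment received")

# One broker has a hiccup, but writes and reads carry on with the others.
log.fail("broker-1")
log.append("order shipped")
surviving_messages = log.read_all()
```

Nothing written before the failure is lost, because the other two brokers already held their own copies.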

For developers, this is a dream. Instead of spending days setting up complex distributed systems, they can get a functional Kafka environment up and running in minutes with the Docker image. This means more time spent building cool features and less time wrestling with infrastructure. It’s like a chef who gets perfectly prepped ingredients from a sous chef – they can focus on the artistry of cooking. And because it's in a Docker container, it's isolated. You can experiment, make a mess (digitally, of course!), and if it all goes sideways, you can just toss the container and start fresh, without affecting your main system. It’s like a sandbox for your data pipeline experiments.

Think about the sheer volume of data generated every second. Emails, social media posts, sensor readings, financial transactions, video streams – it's a deluge! Trying to process all of this with traditional methods is like trying to drink from a firehose. Kafka, with its distributed nature and high throughput, is designed to handle these massive streams of data. It can ingest and distribute information at incredible speeds, acting as a buffer and a conduit for all that digital noise. It’s the ultimate digital dam, controlling and directing the flow of information.

The Confluent Kafka Docker image often comes with some helpful additions. For example, it might include tools like Kafka Connect, which makes it much easier to get data into and out of Kafka from all sorts of sources: databases, cloud storage, other messaging systems. Imagine you have all your customer data in an old-school spreadsheet, and you want to feed it into your new real-time analytics system. Kafka Connect, often bundled or easily integrated with the Confluent Docker image, can act as a bridge, transferring that data with the right connector. It’s like having a universal adapter for all your digital devices.

So, in a nutshell, if you've got applications that need to communicate reliably and at scale, and you don't want to spend your life configuring servers, the Confluent Kafka Docker image is your best friend. It’s the easy button for getting a powerful, distributed messaging system up and running. It’s like having a pre-built, high-performance engine for your data, ready to plug into your application's chassis. It lets you focus on what your applications do, rather than how they talk to each other. It brings a little bit of order, a lot of speed, and a whole lot less headache to your digital world. And who doesn't need a little more of that?