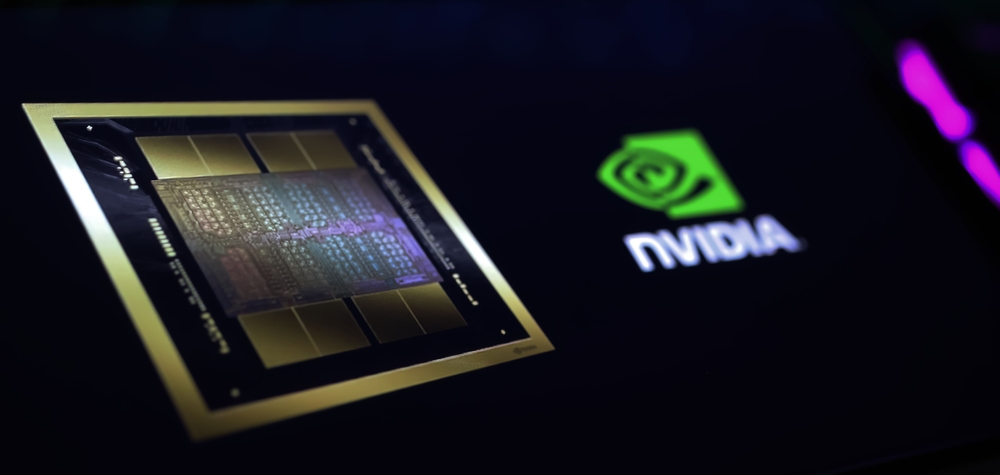

Nvidia's Blackwell AI Chips and Server Racks Are Reportedly Overheating: Complete Guide & Key Details

Alright, folks, gather 'round for a tale from the digital frontier, a story that involves more heat than your grandma's oven on Thanksgiving Day. We're talking about Nvidia's shiny new Blackwell AI chips and the massive server racks that house them. Now, you might be thinking, "What's a Blackwell chip got to do with me? I'm just trying to stream my cat videos without buffering!" Well, settle in, because these super-brains are the engine behind a lot of the cool AI stuff you're starting to see, from smarter voice assistants to those algorithms that somehow know you're thinking about buying a new spatula. And apparently, these powerful engines are running a little… toasty.

Imagine this: you've just bought the most cutting-edge gaming PC on the market. It's sleek, it's powerful, and it promises to render virtual worlds with breathtaking realism. You boot it up, fire up your favorite game, and within minutes, your room feels like a sauna. The fans are screaming like they're auditioning for a heavy metal band, and you're starting to wonder if you accidentally bought a personal space heater instead of a computer. That, my friends, is a mild, everyday version of what's reportedly happening with Nvidia's Blackwell chips.

These aren't just any chips; they're the rockstars of the AI world right now. Nvidia, long the dominant name in graphics cards, has pivoted hard into the AI game, and Blackwell is its latest masterpiece. Think of it as the difference between your old flip phone and the latest smartphone. Blackwell is designed to crunch through unimaginable amounts of data, learning and predicting with lightning speed. It's the kind of power that makes your laptop feel like a calculator from the 80s.

But here's the kicker: all that brainpower comes at a price. And in this case, the price seems to be a bit of an internal meltdown. Reports are trickling in, like that awkward email you get from IT about mandatory software updates, that these high-performance Blackwell chips, when packed into those colossal server racks, are generating a lot of heat. We're talking about temperatures that would make a desert cactus sweat.

The Sweat Lodge of the Future: Why Are These Chips Getting So Hot?

So, why the sudden heatwave in the data centers? It's not like they're playing a particularly intense game of Minesweeper. The answer, in true tech fashion, is a combination of things. Firstly, these Blackwell chips are insanely powerful. They’re designed to do things that were science fiction a decade ago. This level of computational grunt means they're drawing a serious amount of electricity, and as any electrical engineer (or your uncle who's obsessed with power bills) will tell you, nearly every watt a chip draws ends up as heat, so more power means more heat.

Think of it like a really, really fast car. A little economy car gets you from A to B, and it doesn't guzzle gas or get super hot. But a Formula 1 car? That thing is a beast, designed for maximum performance. It needs massive engines, special cooling systems, and it definitely generates a lot of heat when it's pushing its limits. Blackwell chips are the Formula 1 cars of the AI world.

Secondly, it’s about density. These server racks aren't just a few computers in a closet. They are colossal, monolithic beasts. We're talking about rows upon rows of these chips, all crammed together, working tirelessly. Imagine trying to cram a whole marching band into a Smart Car. It’s going to get crowded, and it’s going to get warm. The sheer number of these power-hungry chips packed into a tight space means the heat generated is amplified.
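To put rough numbers on that marching band, here's a back-of-the-envelope sketch in Python. The function name, the wattages, and the overhead share are all illustrative assumptions, not Nvidia specs. The only physics baked in is that essentially all the electricity a chip draws comes back out as heat.

```python
# Back-of-the-envelope rack heat estimate.
# Every number here is an illustrative assumption, not a published spec.

def rack_heat_kw(chips_per_rack: int, watts_per_chip: float,
                 overhead_fraction: float = 0.0) -> float:
    """Estimate the heat output of one rack, in kilowatts.

    Heat output is approximated as total electrical power draw.
    overhead_fraction adds a share for everything that isn't a chip
    (a hypothetical value, just for illustration).
    """
    chip_watts = chips_per_rack * watts_per_chip
    return chip_watts * (1.0 + overhead_fraction) / 1000.0


# A modest rack: a handful of mid-power accelerators (assumed values).
modest_rack = rack_heat_kw(chips_per_rack=8, watts_per_chip=300)

# A dense AI rack: many high-power chips plus overhead (assumed values).
dense_rack = rack_heat_kw(chips_per_rack=72, watts_per_chip=1000,
                          overhead_fraction=0.2)

print(f"modest rack: {modest_rack:.1f} kW, dense rack: {dense_rack:.1f} kW")
```

Swap in your own guesses and the pattern holds: heat scales with both the chip count and the power per chip, so cranking up both at once multiplies the problem.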

And then there's the issue of cooling infrastructure. Data centers are usually designed with sophisticated cooling systems. They have massive air conditioners, chilled water pipes, and airflow management that would make a wind tunnel look like a gentle breeze. But even the best cooling systems have their limits. If the heat being generated exceeds what the system was designed to handle, things start to… well, boil over, metaphorically speaking.

It's like having a tiny apartment with a very enthusiastic roommate who loves to cook elaborate meals. Even with the windows open, the heat from the kitchen can quickly make the whole place feel like a tropical rainforest. Nvidia's Blackwell chips, in their current setup, might be that enthusiastic roommate, and the data center is the tiny apartment.

The Anecdotal Evidence: "Is My AI Assistant Sweating?"

Now, you won't see your chatbots spontaneously developing sweat patches, at least not yet. The overheating issue is primarily happening within the specialized, industrial-grade server environments of big tech companies and research institutions. These are the places that are buying these racks in bulk to train massive AI models, the kind that will eventually power your future gadgets.

But the implications can still reach the everyday user. When these chips overheat, it's not just a minor inconvenience. It can lead to performance throttling. That's a fancy way of saying the chips have to slow down to avoid frying themselves. Think of it like your phone getting hot during a long video call and then suddenly turning sluggish so it can cool off. It’s the same principle, but on a much, much grander scale.
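If you're curious what "slow down to avoid frying" looks like as logic, here's a toy model in Python. It is not how Nvidia's firmware actually works; the function name, temperature thresholds, and clock speeds are all made up for illustration. It just shows the general idea: back off when hot, speed up again once things cool down.

```python
# Toy model of thermal throttling.
# Thresholds and clock speeds are made-up illustrative values,
# not real Blackwell parameters.

def next_clock_mhz(temp_c: float, clock_mhz: float,
                   limit_c: float = 85.0, resume_c: float = 75.0,
                   step_mhz: float = 100.0,
                   min_mhz: float = 500.0, max_mhz: float = 2000.0) -> float:
    """Return the clock speed to use for the next interval."""
    if temp_c >= limit_c:
        # Too hot: slow down, but never below the floor.
        return max(min_mhz, clock_mhz - step_mhz)
    if temp_c <= resume_c:
        # Comfortably cool: speed back up, but never past the ceiling.
        return min(max_mhz, clock_mhz + step_mhz)
    # In between: hold steady so the clock doesn't flip-flop.
    return clock_mhz


clock = 2000.0
for temp in [70, 86, 90, 88, 80, 74]:  # hypothetical temperature readings
    clock = next_clock_mhz(temp, clock)
    print(f"{temp} C -> {clock:.0f} MHz")
```

The gap between the two thresholds is deliberate: without it, a chip hovering right at the limit would bounce between fast and slow every interval.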

Imagine your favorite AI-powered app suddenly becoming sluggish. Or that AI chatbot you’re using for homework help starts taking ages to respond. While there could be many reasons for that, a potential bottleneck in the underlying hardware – like overheated chips – could be a contributing factor. It’s like trying to get a crucial package delivered, but the delivery truck broke down on the highway and had to be towed. The delivery gets delayed, and you’re left waiting.

It can also lead to reduced lifespan for the components. Running hot for extended periods is like pulling an all-nighter every single day. Eventually, you're going to wear out. For these incredibly expensive pieces of technology, that's a big problem. Companies are investing billions of dollars in AI infrastructure, and they want those servers to hum along for as long as possible.

Think of it like a vintage car. If you drive it like it’s meant to win a race every single day, without proper maintenance and cooling, it’s going to break down much faster than if you drive it sensibly. These Blackwell chips are the bleeding edge, and pushing them to their absolute limit, all the time, is a recipe for potential trouble.

The "Complete Guide" to What This Means for You (Sort Of)

Okay, so you're probably not going to feel the heat directly radiating from your local data center. But the ripple effects can be felt. Here's a breakdown of the key details and what it all boils down to:

1. The Nvidia Blackwell Chip: A Mini Supercomputer

Blackwell is Nvidia’s latest generation of AI accelerators. The chips are built on a new architecture designed for massive parallel processing, meaning they can do tons of calculations at the same time. This is crucial for training and running complex AI models.

- What it does: Powers advanced AI tasks like natural language processing, computer vision, and recommendation engines.

- Why it’s a big deal: It's a significant leap forward in AI processing power, setting new benchmarks.

- The downside: It’s an absolute power hog.

2. The Server Racks: The Batcave of AI

These aren't your office server closets. We're talking about specialized racks designed to house dozens of these powerful GPUs; the reports describe racks built to hold up to 72 chips. They're built for maximum density, packing as much computing power into a small space as possible.

- What they are: Industrial-scale units housing multiple Blackwell chips.

- Why they’re used: To create massive AI computing clusters.

- The problem: Packing so much power in one place creates a concentrated heat problem.

3. The Overheating Issue: More Than Just a Warm Feeling

Reports suggest that under heavy load, the Blackwell chips and their surrounding server infrastructure are hitting temperatures that are pushing or exceeding optimal operating limits. This isn't just a "slightly warm to the touch" scenario.

- What’s happening: Chips are generating excessive heat that current cooling solutions are struggling to dissipate effectively.

- Consequences: Performance throttling, potential component degradation, and increased energy consumption for cooling.

- Analogy: Like trying to cool a superheated engine with a tiny fan.

4. Nvidia's Response (or Lack Thereof… Yet)

Naturally, a company like Nvidia doesn't just let its flagship products overheat without a plan. They're likely already working on solutions. This could involve software optimizations, firmware updates, or even recommending specific cooling configurations for their customers.

- What they’re likely doing: Investigating the reports, developing software fixes, and providing guidance on optimal cooling.

- What you might see: Future software updates that manage chip performance more efficiently.

- What you probably won't see: A public recall of server racks. A series of technical advisories is more likely.

5. The Impact on the AI Revolution

This might sound like a niche problem, but it's actually quite significant. The pace of AI development is directly tied to the availability of powerful computing hardware. If these chips can't operate at their peak efficiency due to heat, it could slow down the training of the next generation of AI models.

- The bigger picture: Overheating can be a bottleneck for AI development and deployment.

- Your future AI: Slower training times could mean your favorite AI features take longer to arrive or improve.

- The long game: Companies will need to invest even more in advanced cooling technologies to fully leverage these powerful chips.

The Cool Down: What's Next?

The good news is that the tech world is pretty good at solving problems, especially when billions of dollars are on the line. Nvidia is undoubtedly looking at this issue with the intensity of a hawk. They'll be working on making these chips run more efficiently, or at least providing clearer guidelines on how to cool them effectively.

We might see new cooling technologies emerge, like advanced liquid cooling systems becoming standard. It’s not as simple as just pointing a fan at it. These are sophisticated systems that require precise engineering.
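For a feel of why the plumbing takes real engineering, here's a rough Python sketch using the standard heat-transfer relation Q = ṁ · c_p · ΔT (heat carried away equals mass flow times specific heat times temperature rise). The rack heat load and the allowed water temperature rise are assumed round numbers, not figures from any real deployment, and the function name is just for illustration.

```python
# Rough coolant flow needed to carry heat away: Q = m_dot * c_p * delta_T.
# Heat load and temperature rise are assumed values for illustration.

WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), approximate value for liquid water
WATER_DENSITY = 1.0           # kg per litre, approximate


def water_flow_l_per_min(heat_kw: float, delta_t_k: float) -> float:
    """Litres of water per minute needed to absorb heat_kw of heat
    if the water is allowed to warm up by delta_t_k kelvin."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw * 1000.0 / (WATER_SPECIFIC_HEAT * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY * 60.0


# Hypothetical rack dumping 100 kW into water that warms by 10 K.
flow = water_flow_l_per_min(heat_kw=100.0, delta_t_k=10.0)
print(f"{flow:.0f} L/min")
```

Halve the allowed temperature rise and the required flow doubles, which is part of why pumps, pipes, and chillers all have to be sized together.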

Think of it like this: you’ve got a super-powered coffee maker that brews the most amazing coffee, but it also generates a ridiculous amount of steam. You wouldn't just leave it on your wooden counter and hope for the best. You'd get a special heat-resistant mat, maybe some ventilation. It's the same principle, but with a lot more silicon and a lot more watts.

So, while the idea of AI chips overheating might sound a little dramatic, it’s a very real engineering challenge. It’s a sign that we’re pushing the boundaries of what’s possible, and sometimes, when you push hard enough, things get a little hot under the collar. For now, let’s just be thankful our own laptops aren't quite at that fever pitch. Yet.