What Is the American Standard Code for Information Interchange?

Hey there, my tech-savvy (or soon-to-be tech-savvy!) friend! Ever wondered how your computer, that magical box of blinking lights and endless cat videos, actually talks to itself? Or more importantly, how it talks to other computers, devices, and even your trusty old printer that’s probably older than your favorite pair of jeans?

Well, buckle up, buttercup, because we're about to dive into the wonderfully weird and surprisingly important world of something called ASCII. Now, I know what you're thinking. "ASCII? Sounds like a fancy new energy drink or a brand of artisanal cheese." And honestly, I wouldn't blame you! It’s not exactly the most glamorous name in the tech universe, is it? But trust me, this little acronym is the unsung hero of our digital lives. Think of it as the secret handshake of computers.

So, what exactly is this mysterious ASCII thing? The full, glorious name is the American Standard Code for Information Interchange. Phew! Just saying that out loud probably burned a few calories. But let's break it down, shall we? It’s essentially a character encoding standard.

Now, I can see you tilting your head. "Character encoding? What in the digital realm does that mean?" Imagine you have a bunch of LEGO bricks, right? Each brick is a different shape and color, and you can put them together to build anything you want. ASCII is kind of like that, but for letters, numbers, and symbols.

Computers, at their core, are pretty simple creatures. They understand electrical signals, basically just "on" or "off" states. Think of it like a light switch. It's either on or off. There's no "sort of on" or "a little bit off." This "on" and "off" can be represented by numbers, specifically the binary numbers 0 and 1. So, everything your computer does, every picture you see, every song you stream, is ultimately just a massive, mind-boggling sequence of 0s and 1s.

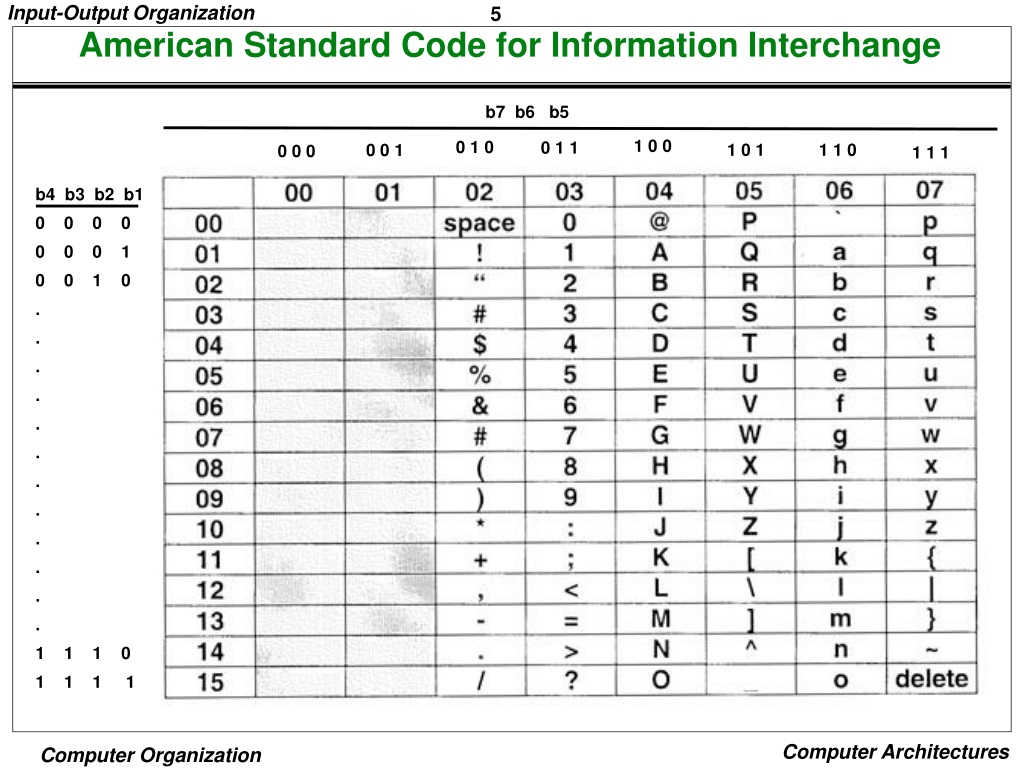

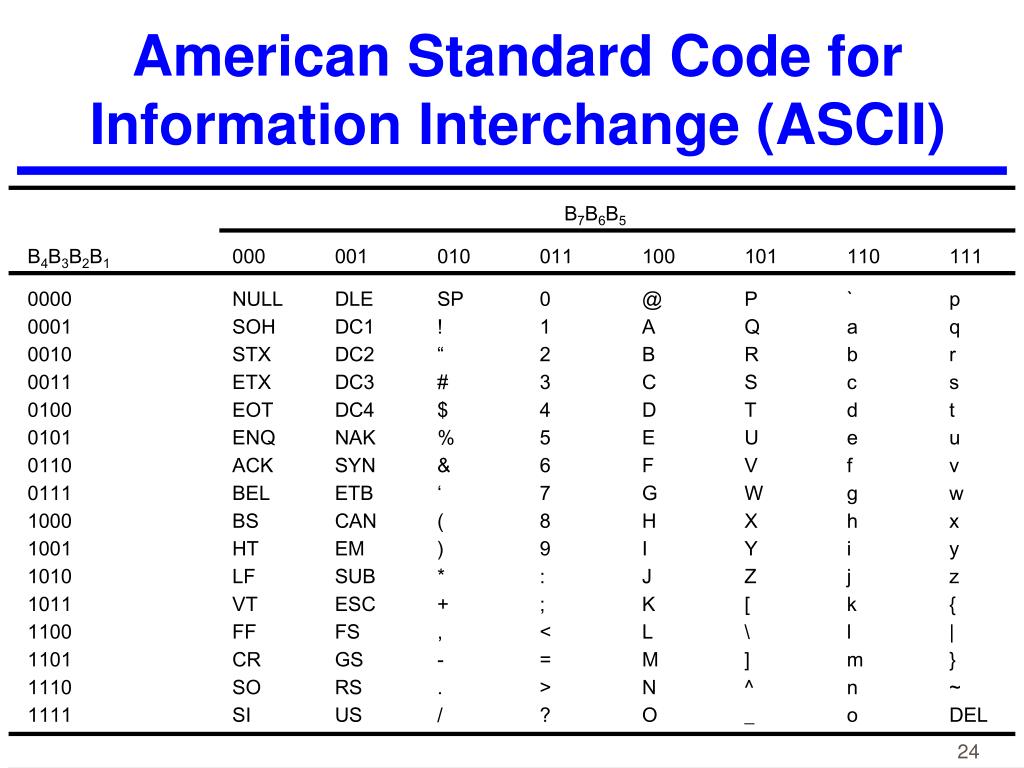

But how do you get from a bunch of 0s and 1s to the letter "A," or the number "7," or the exclamation point you love to spam your friends with? That’s where ASCII steps in like a digital superhero. ASCII assigns a unique numerical code to each letter of the English alphabet (both uppercase and lowercase), the digits 0-9, various punctuation marks, and some control characters (which we’ll get to later, don’t you worry your pretty little head about them just yet).

Think of it as a dictionary for computers. When you type the letter "H" on your keyboard, your computer doesn't magically know what "H" looks like. Instead, the keypress gets translated into a number, and ASCII is the agreement about which number means which character. For the uppercase "H," that number is 72. So, internally, your computer is just chugging along with the number 72, not the letter "H" as we see it.

And then, when it's time to display that "H" on your screen, the computer takes the number 72, finds the matching character shape in a font, and draws the letter "H." Pretty neat, huh? It's like a secret code that makes our digital world understandable.
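To make that round trip concrete, here's a tiny sketch in Python (my choice of language for illustration; nothing about ASCII requires it). The built-in `ord` and `chr` functions convert between a character and its code, and for plain English characters that code is the ASCII value.

```python
# Character -> number: the code the computer stores for "H".
code = ord("H")
print(code)        # 72

# The same number written out as 7 binary digits (bits).
bits = format(code, "07b")
print(bits)        # 1001000

# Number -> character: going back the other way.
letter = chr(72)
print(letter)      # H

# A whole word is just a list of numbers.
word_codes = [ord(ch) for ch in "Hi!"]
print(word_codes)  # [72, 105, 33]
```

Drawing the actual shape of the letter is a separate job handled by fonts, so it isn't shown here.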

ASCII was developed in the early 1960s, and the first edition of the standard was published in 1963. Yes, that's right, it's been around the block a few times! It was initially designed for teleprinters, those clunky machines that used to send text messages over telegraph lines. Imagine sending a message and having it printed out on a long roll of paper at the other end. Old school cool!

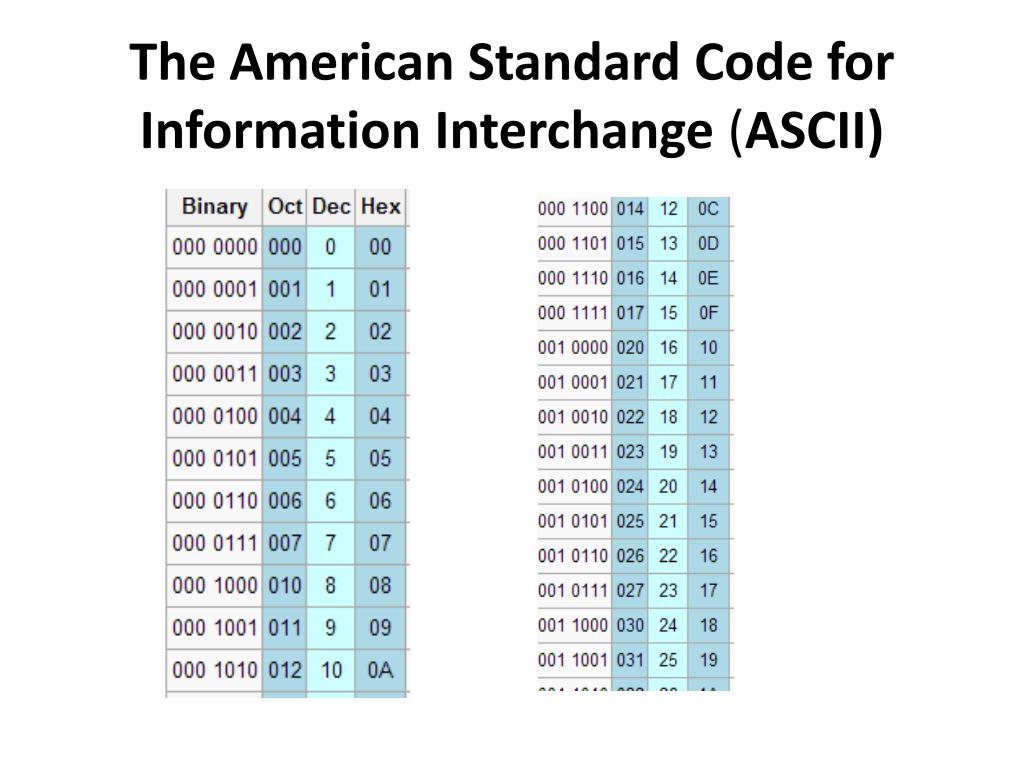

The first version of ASCII used 7 bits to represent each character. Now, a bit is like a tiny little storage unit for a 0 or a 1. So, with 7 bits, you can have 2 raised to the power of 7 different combinations. That’s 2 x 2 x 2 x 2 x 2 x 2 x 2, which equals 128 possible characters.
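If you'd rather see that arithmetic than take it on faith, here's a small Python sketch that counts every possible 7-bit pattern. The variable names are just for illustration.

```python
from itertools import product

BITS = 7

# The quick way: 2 multiplied by itself 7 times.
print(2 ** BITS)      # 128

# The slow way: actually list every pattern of seven 0s and 1s.
patterns = ["".join(p) for p in product("01", repeat=BITS)]
print(len(patterns))  # 128
print(patterns[0])    # 0000000
print(patterns[-1])   # 1111111

# One extra bit doubles the number of patterns.
print(2 ** 8)         # 256
```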

This might sound like a lot, but think about it. You have:

* Uppercase letters (A-Z) - 26 characters
* Lowercase letters (a-z) - 26 characters
* Numbers (0-9) - 10 characters
* Punctuation marks and symbols (like !, ?, ., ,, etc.) - 32 characters, plus the space
* And then 33 special "control characters" (we’ll touch on these briefly later, I promise!) that are used for things like telling the printer to move to the next line or to signal the end of a transmission.
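Here's a quick tally of that breakdown in Python, leaning on the standard `string` module. It's a sketch for checking the math: the space is counted on its own line, and the control characters are taken to be codes 0 through 31 plus 127 (DEL).

```python
import string

# Control characters: codes 0-31, plus 127 (DEL).
control = [c for c in range(128) if c < 32 or c == 127]

counts = {
    "uppercase": len(string.ascii_uppercase),  # A-Z
    "lowercase": len(string.ascii_lowercase),  # a-z
    "digits": len(string.digits),              # 0-9
    "punctuation": len(string.punctuation),    # !"#$%&... and friends
    "space": 1,
    "control": len(control),
}

for name, n in counts.items():
    print(f"{name:12} {n}")

total = sum(counts.values())
print(total)  # 128
```

Every one of the 128 slots is spoken for, which is why there was no room left over for accented letters.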

So, 128 characters was just enough to cover all the essentials for basic English communication. It was a pretty big deal back then!

You might be wondering, "Why 'American Standard'?" Well, it was developed by a committee of the American Standards Association (the organization known today as ANSI, whose name also lives on in the "ANSI escape codes" behind those colorful ANSI art graphics you sometimes see in text files). Once published, it caught on well beyond the United States and became the common baseline that later encodings were built on. It was like the cool kid on the block that everyone else decided to copy.

The standard ASCII character set can be broadly divided into two parts: the printable characters and the control characters.

The printable characters (95 of them, counting the space) are the ones we see and interact with every day: the letters, numbers, and punctuation marks. These are the building blocks of our conversations, our documents, and pretty much everything we type. When you see a plain English letter, digit, or punctuation mark on your screen, chances are the number behind it is its ASCII code.

The control characters are a bit more… well, controlling! They don't usually display anything on the screen. Instead, they're like invisible instructions for devices. Some common ones include:

* Line Feed (LF), often just called newline: This tells the device to move down to the next line. On many systems, it's what you get when you hit the "Enter" key.
* Carriage Return (CR): Historically, this told the printer to move the print head back to the beginning of the line. On some systems (Windows, for example), it’s paired with Line Feed to end a line.
* Horizontal Tab (HT): This indents your text, like when you're formatting a document.
* Backspace (BS): Originally, this moved the print head back one position. These days we associate it with deleting the previous character. Oops, made a typo? Backspace to the rescue!
* Escape (ESC): This is a fun one. It's often used to signal the start of an escape sequence, which is a series of characters that have a special meaning. Think of it as a "get out of jail free" card for certain commands.
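Since these characters are invisible, it helps to look at their numbers instead. Here's a small Python sketch that prints the ASCII code for each of the five listed above. The dictionary labels are mine; the escape notations (`\b`, `\t`, `\n`, `\r`, `\x1b`) are standard Python.

```python
control_codes = {
    "BS  (backspace)": ord("\b"),
    "HT  (horizontal tab)": ord("\t"),
    "LF  (line feed / newline)": ord("\n"),
    "CR  (carriage return)": ord("\r"),
    "ESC (escape)": ord("\x1b"),
}

for name, code in control_codes.items():
    print(f"{code:3d}  {name}")

# repr() makes the invisible characters visible in a string.
line = "col1\tcol2\r\n"
print(repr(line))  # 'col1\tcol2\r\n'
```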

These control characters were super important for making sure that text was displayed and processed correctly on different types of devices. They were the silent conductors of the early digital orchestra.

Now, here's where things get a little more interesting, and a little less American-centric. The original 7-bit ASCII had its limitations. What about characters from other languages? What about fancy symbols? What about, you know, emojis?

This is where the concept of extended ASCII comes into play. Since computers typically work with bytes (which are 8 bits), there was an extra bit available. This extra bit could be used to create another set of characters, bringing the total to 256. This allowed for more characters, including accented letters for European languages, some Greek letters, and a few other symbols.

However, here's the catch: there wasn't just one standard for extended ASCII. Different companies and operating systems came up with their own versions! This led to a lot of confusion. Imagine sending a document to someone, and because they had a different "extended ASCII" setup, all the fancy characters turned into gibberish. It was like trying to have a conversation with someone who keeps mixing up their words – frustrating, to say the least!
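You can recreate that gibberish effect in a few lines of Python. This sketch assumes two particular "extended ASCII" code pages as stand-ins, `latin-1` and `cp437` (my picks for illustration; plenty of others existed), and decodes the very same bytes with each.

```python
text = "café"

# The sender saves the text using the Latin-1 code page.
raw = text.encode("latin-1")
print(raw)        # b'caf\xe9'

# A receiver who also assumes Latin-1 sees what was intended.
as_latin1 = raw.decode("latin-1")
print(as_latin1)  # café

# A receiver who assumes code page 437 gets a different last character.
as_cp437 = raw.decode("cp437")
print(as_cp437)

# The first three bytes are plain 7-bit ASCII, so everyone agrees on them.
print(raw[:3].decode("ascii"))  # caf
```

Only the byte above 127 causes trouble, which is exactly the part of the table that was never standardized in one place.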

This is why, as the internet grew and the world became more interconnected, we needed a more robust solution. Enter Unicode. Unicode is the modern-day, global standard that aims to represent every character from every writing system in the world, plus a whole lot more. Think of it as ASCII's super-powered, multilingual, international cousin who has traveled the world and learned every language.

While ASCII is still very much alive and well, especially for basic English text and in many programming contexts where simplicity and efficiency are key, Unicode is what powers the vast majority of modern computing. When you see an emoji, a Chinese character, or an Arabic script, you're looking at something encoded in Unicode.

But don't underestimate ASCII just yet! It’s the foundation upon which so much of our digital world was built. It’s the bedrock. It’s the classic car that, while maybe not as feature-packed as a modern SUV, has a certain timeless charm and reliability. In fact, the first 128 Unicode code points are exactly the ASCII characters, and in UTF-8 (the most widely used Unicode encoding) plain ASCII text is stored byte for byte the way it always was. So, while Unicode might be the grand palace, ASCII is the sturdy, well-built foundation it stands on.
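Here's a short Python sketch of how ASCII sits inside Unicode, using UTF-8 as the encoding (an assumption on my part, though it's the one you'll meet most often). Plain English text comes out as the same bytes either way, while other characters need more room.

```python
ascii_text = "Hello"

# Plain English text encodes to identical bytes in ASCII and UTF-8.
same_bytes = ascii_text.encode("ascii") == ascii_text.encode("utf-8")
print(same_bytes)  # True

# Characters beyond ASCII get bigger code points and take more bytes.
samples = ["A", "é", "中", "😀"]
byte_lengths = {ch: len(ch.encode("utf-8")) for ch in samples}

for ch in samples:
    print(ch, ord(ch), byte_lengths[ch])
# A takes 1 byte, é takes 2, 中 takes 3, and 😀 takes 4.
```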

Think about plain text files, like `.txt` files. These are often pure ASCII, or UTF-8 that happens to contain nothing but ASCII characters (the bytes are identical either way). Why? Because they are meant to be universally compatible and simple. No fancy formatting, just pure text. This makes them incredibly useful for configuration files, logs, and simple notes. They're the digital equivalent of a blank piece of paper.
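If you're curious whether a given chunk of text really is pure ASCII, the test is simple: every byte has to be below 128. Here's a minimal sketch with a hypothetical helper called `is_plain_ascii` (recent Python versions also offer a built-in `bytes.isascii()` that does the same job). The sample contents are made up.

```python
def is_plain_ascii(data: bytes) -> bool:
    """Return True if every byte falls in the 7-bit ASCII range (0-127)."""
    return all(byte < 128 for byte in data)


# Made-up sample contents, standing in for what you'd read from a file.
config = b"name = demo\nretries = 3\n"
note = "naïve café".encode("utf-8")

print(is_plain_ascii(config))  # True
print(is_plain_ascii(note))    # False
```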

The beauty of ASCII lies in its simplicity and its universality within its scope. It provided a common language for machines to understand and exchange text. Before ASCII, different computer manufacturers had their own proprietary ways of representing characters, making it incredibly difficult to share information between systems. ASCII broke down those barriers.

It's like when you're traveling and you learn a few basic phrases in the local language. Even if you can't have a deep philosophical discussion, you can order food, ask for directions, and generally get by. ASCII did that for computers.

So, next time you’re typing an email, writing code, or even just admiring a really well-formatted text-based artwork, take a moment to appreciate the humble American Standard Code for Information Interchange. It’s the silent, invisible force that allows us to communicate, create, and connect in the digital realm.

It’s a testament to ingenuity, a clever solution to a fundamental problem, and a crucial stepping stone in the evolution of computing. From its humble beginnings with teleprinters to its ongoing role in modern systems, ASCII has truly shaped the way we interact with technology. So, let's give a round of applause (virtually, of course!) for this foundational hero of the digital age. It’s proof that sometimes, the simplest codes can lead to the most amazing connections!